Logicmojo - Updated March 18, 2022

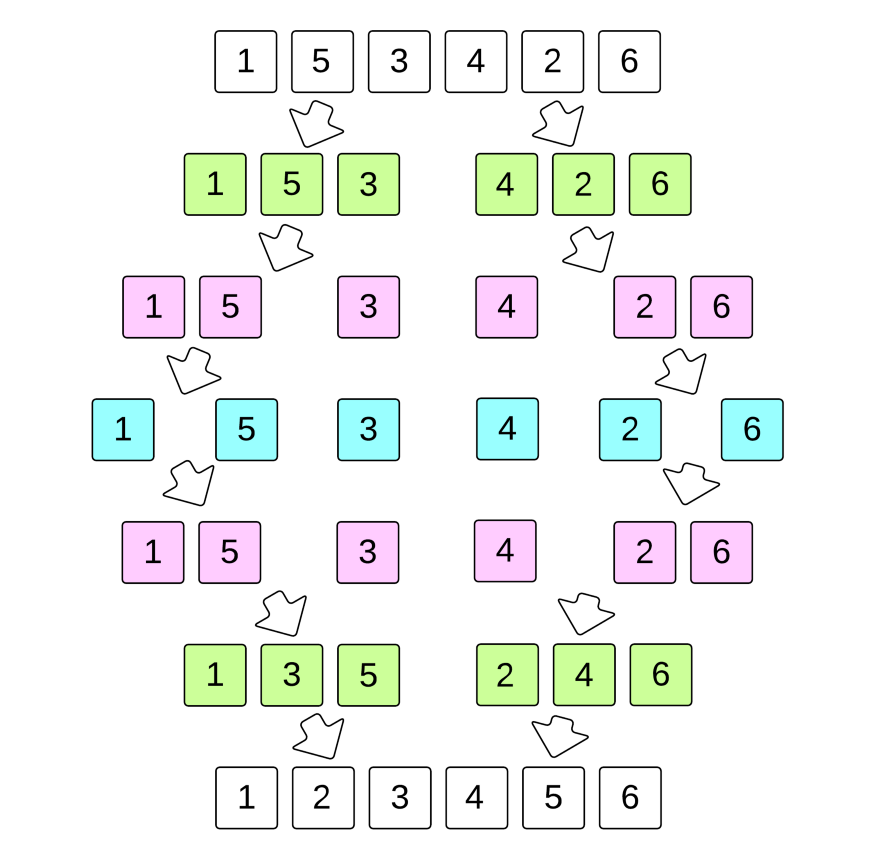

What is Merge Sort? How does it work, and how does its recursion actually unfold compared with how we might first picture it? What are its advantages and disadvantages? These are the questions that usually come to mind, and this article works through them with an example.

Merge Sort is a sorting algorithm. According to Wikipedia, it was invented by John von Neumann in 1945. It is a divide and conquer algorithm. Divide and conquer is an algorithm design pattern in which you divide the input recursively into subproblems until each one is simple enough to solve directly, and then combine the partial solutions into the solution of the original problem.

When two sorted sublists are merged, the first element of each list is compared. When sorting in ascending order, the smaller of the two is removed from its sublist and appended to the new sorted list. This procedure is repeated until both sublists are empty and the new combined list contains all the elements of both sublists.
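The merging step described above can be sketched in Python as follows. This is a minimal illustration, not a reference implementation: the name `merge_sorted` is a hypothetical choice, and both inputs are assumed to be already sorted in ascending order.

```python
def merge_sorted(left, right):
    """Merge two ascending lists into one new ascending list."""
    merged = []
    i = j = 0  # index of the current "first" element in each sublist

    # Compare the front elements and take the smaller one each time.
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1

    # One sublist is exhausted; copy whatever remains of the other.
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


result = merge_sorted([1, 5, 9], [2, 3, 10])
print(result)  # [1, 2, 3, 5, 9, 10]
```

Indices are used instead of removing elements from the front of each list, since popping from the front of a Python list costs O(n) per call.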

Merge Sort algorithm: how it works, step by step:

1. If the list contains only one element, it is already sorted; return.

2. Otherwise, divide the list recursively into two halves until it cannot be divided any further.

3. Merge the smaller lists into a new list in sorted order.

Below is an image of an array, which needs to be sorted. We will use the Merge Sort Algorithm, to sort this array:

Algorithm

The MergeSort function repeatedly divides the array into two halves until we reach a stage where we try to perform MergeSort on a subarray of size 1 i.e. p == r. After that, the merge function comes into play and combines the sorted arrays into larger arrays until the whole array is merged.

    MergeSort(A, p, r):
        if p >= r
            return
        q = floor((p + r) / 2)
        MergeSort(A, p, q)
        MergeSort(A, q + 1, r)
        Merge(A, p, q, r)

Characteristics of Merge Sort

1. Merge Sort is well suited to sorting linked lists.

2. Merge Sort is a stable sort, which means that equal elements keep their original order relative to each other.

3. The overall time complexity of Merge Sort is O(n log n), and this bound holds in the worst case as well.

4. The space complexity of Merge Sort is O(n) when sorting arrays. The extra memory can be significant and may slow down operations on large data sets.
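Stability (characteristic 2) is easiest to see with records that share a sort key. The sketch below is illustrative only: the function name `merge_sort_by_key` and the sample records are made up for this example.

```python
def merge_sort_by_key(items, key):
    """Return a new list sorted by key(item), keeping ties in input order."""
    if len(items) <= 1:
        return list(items)

    mid = len(items) // 2
    left = merge_sort_by_key(items[:mid], key)
    right = merge_sort_by_key(items[mid:], key)

    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        # "<=" takes from the left half on ties, which is what makes
        # the sort stable; a strict "<" here would break stability.
        if key(left[i]) <= key(right[j]):
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


# Hypothetical (name, age) records; two pairs share the same age.
records = [("bob", 25), ("alice", 30), ("carol", 25), ("dave", 30)]
by_age = merge_sort_by_key(records, key=lambda r: r[1])
print(by_age)
# [('bob', 25), ('carol', 25), ('alice', 30), ('dave', 30)]
```

Within each age group, the names appear in the same order as in the input: bob before carol, and alice before dave.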

Applications of Merge Sort

1. Merge Sort can sort linked lists in O(n log n) time. Linked lists differ from arrays mainly in how memory is allocated: unlike array elements, linked list nodes may not be adjacent in memory. Given a reference to a node, an item can be inserted into the middle of a linked list in O(1) time and O(1) extra space, which is not possible in an array. Therefore, the merge operation of Merge Sort can be implemented without an auxiliary array for linked lists.

2. Inversion Count Problem

3. Used in External Sorting
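The linked-list case from item 1 can be sketched as below. This is one possible approach under stated assumptions: the `Node` class and the helpers `from_list`, `to_list`, `merge_lists`, and `sort_list` are hypothetical names introduced for illustration, and the list is singly linked.

```python
class Node:
    def __init__(self, value, next=None):
        self.value = value
        self.next = next


def from_list(values):
    """Build a linked list from a Python list and return its head."""
    head = None
    for v in reversed(values):
        head = Node(v, head)
    return head


def to_list(head):
    """Collect the node values into a Python list."""
    out = []
    while head is not None:
        out.append(head.value)
        head = head.next
    return out


def merge_lists(a, b):
    """Merge two sorted linked lists by relinking nodes (no copies)."""
    dummy = Node(None)  # placeholder so the tail always exists
    tail = dummy
    while a is not None and b is not None:
        if a.value <= b.value:
            tail.next, a = a, a.next
        else:
            tail.next, b = b, b.next
        tail = tail.next
    tail.next = a if a is not None else b  # attach the leftover run
    return dummy.next


def sort_list(head):
    """Top-down merge sort on a singly linked list."""
    if head is None or head.next is None:
        return head
    # Find the middle with slow/fast pointers, then cut the list in two.
    slow, fast = head, head.next
    while fast is not None and fast.next is not None:
        slow = slow.next
        fast = fast.next.next
    second = slow.next
    slow.next = None
    return merge_lists(sort_list(head), sort_list(second))


sorted_values = to_list(sort_list(from_list([6, 5, 12, 10, 9, 1])))
print(sorted_values)  # [1, 5, 6, 9, 10, 12]
```

The merge only rewires `next` pointers, so it needs no auxiliary array; the recursion itself still uses O(log n) stack space in this top-down form.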

Implementation in Python

# MergeSort in Python
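A minimal Python sketch that follows the pseudocode from the Algorithm section, using the same inclusive indices `p`, `q`, and `r`. The function names `merge_sort` and `merge` are illustrative choices, and the sample array is the one used in the C++ driver program.

```python
def merge(arr, p, q, r):
    """Merge the sorted runs arr[p..q] and arr[q+1..r] in place."""
    left = arr[p:q + 1]       # copy of arr[p..q]
    right = arr[q + 1:r + 1]  # copy of arr[q+1..r]

    i = j = 0
    k = p
    # Pick the smaller front element until one copy is exhausted.
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            arr[k] = left[i]
            i += 1
        else:
            arr[k] = right[j]
            j += 1
        k += 1

    # Copy any remaining elements back into arr.
    while i < len(left):
        arr[k] = left[i]
        i += 1
        k += 1
    while j < len(right):
        arr[k] = right[j]
        j += 1
        k += 1


def merge_sort(arr, p, r):
    """Sort arr[p..r] (inclusive bounds) in ascending order."""
    if p >= r:  # zero or one element: already sorted
        return
    q = (p + r) // 2
    merge_sort(arr, p, q)
    merge_sort(arr, q + 1, r)
    merge(arr, p, q, r)


data = [6, 5, 12, 10, 9, 1]
merge_sort(data, 0, len(data) - 1)
print("Sorted array:", data)  # Sorted array: [1, 5, 6, 9, 10, 12]
```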

Implementation in C++

    // Merge sort in C++
    #include <iostream>
    #include <vector>
    using namespace std;

    // Merge the sorted subarrays arr[p..q] and arr[q+1..r] back into arr
    void merge(int arr[], int p, int q, int r) {
        // Create L ← A[p..q] and M ← A[q+1..r]
        // (std::vector is used because variable-length arrays are not standard C++)
        int n1 = q - p + 1;
        int n2 = r - q;
        vector<int> L(n1), M(n2);

        for (int i = 0; i < n1; i++)
            L[i] = arr[p + i];
        for (int j = 0; j < n2; j++)
            M[j] = arr[q + 1 + j];

        // Maintain current index of sub-arrays and main array
        int i = 0, j = 0, k = p;

        // Until we reach the end of either L or M, pick the smaller of the
        // current elements of L and M and place it in the correct position at A[p..r]
        while (i < n1 && j < n2) {
            if (L[i] <= M[j]) {
                arr[k] = L[i];
                i++;
            } else {
                arr[k] = M[j];
                j++;
            }
            k++;
        }

        // When we run out of elements in either L or M,
        // pick up the remaining elements and put them in A[p..r]
        while (i < n1) {
            arr[k] = L[i];
            i++;
            k++;
        }
        while (j < n2) {
            arr[k] = M[j];
            j++;
            k++;
        }
    }

    // Divide the array into two subarrays, sort them and merge them
    void mergeSort(int arr[], int l, int r) {
        if (l < r) {
            // m is the point where the array is divided into two subarrays
            int m = l + (r - l) / 2;

            mergeSort(arr, l, m);
            mergeSort(arr, m + 1, r);

            // Merge the sorted subarrays
            merge(arr, l, m, r);
        }
    }

    // Print the array
    void printArray(int arr[], int size) {
        for (int i = 0; i < size; i++)
            cout << arr[i] << " ";
        cout << endl;
    }

    // Driver program
    int main() {
        int arr[] = {6, 5, 12, 10, 9, 1};
        int size = sizeof(arr) / sizeof(arr[0]);

        mergeSort(arr, 0, size - 1);

        cout << "Sorted array: \n";
        printArray(arr, size);
        return 0;
    }

Time & Space Complexity Analysis

Merge Sort is quite fast, with a time complexity of O(n*log n). It is also a stable sort, which means that "equal" elements appear in the sorted list in the same relative order as in the input.

As with Binary Search, whenever a quantity is halved at every step, the number of steps is logarithmic: an array of n elements can be halved about log n times, so the recursion has at most log n + 1 levels.

Finding the middle of any subarray is a single-step operation, i.e. O(1). Merging the subarrays at one level of the recursion, which together hold all n elements of the original array, requires a running time of O(n).

Hence the total time for the mergeSort function is n(log n + 1), which gives us a time complexity of O(n*log n).
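The same bound can be written as a recurrence and unrolled. The derivation below is a sketch under two simplifying assumptions that are not in the original text: n is a power of 2, and c is a constant that bounds both the cost of sorting one element and the per-element cost of merging.

```latex
\begin{aligned}
T(1) &= c \\
T(n) &= 2\,T(n/2) + c\,n \\
     &= 4\,T(n/4) + 2\,c\,n \\
     &= \dots \\
     &= 2^{k}\,T(n/2^{k}) + k\,c\,n \\
     &= n\,T(1) + c\,n\log_2 n \qquad (k = \log_2 n) \\
     &= c\,n\,(\log_2 n + 1) = O(n \log n)
\end{aligned}
```

Each of the log n + 1 levels contributes c*n work, which matches the n(log n + 1) total stated above.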

Space Complexity: O(n)

With this article at Logicmojo, you should now have a complete picture of how the Merge Sort algorithm works and how to analyze it.

Good Luck & Happy Learning!!