Logicmojo - Updated Jan 23, 2023

Machine learning has pushed artificial intelligence forward considerably, and deep learning is its most advanced technique for tackling complex problems that involve large data sets. In this blog post, you will learn the fundamental ideas behind neural networks: what they are and how they can be used to solve challenging data-driven problems.

The brain is the most fundamental component of the human body. Its biological neural network receives signals as inputs, processes them, and sends signals out as outputs. The neuron is the basic building block of the brain, and an artificial neural network (ANN) is modelled on this structure. Maureen Caudill, an expert in artificial intelligence, describes an ANN as "a computing system made up of several simple, highly interconnected processing components, which process information by their dynamic state reaction to external inputs".

Conventional computer programs are sets of commands that carry out the programmer's instructions exactly. If the programmer does not know how to solve a problem in a given case, the program cannot solve it either. ANNs, by contrast, learn from examples and experience rather than from predefined instructions, and they apply what they have learned when they are presented with new inputs. Computers become far more useful when they can work out how to solve problems that people cannot solve explicitly. This makes ANNs well suited to pattern recognition and data classification.

What is an Artificial Neural Network?

Neural networks, also known as artificial neural networks (ANNs) or simulated neural networks (SNNs), are a subset of machine learning and form the basis of deep learning techniques. Their structure and name are modelled after the human brain, mirroring the way biological neurons signal to one another.

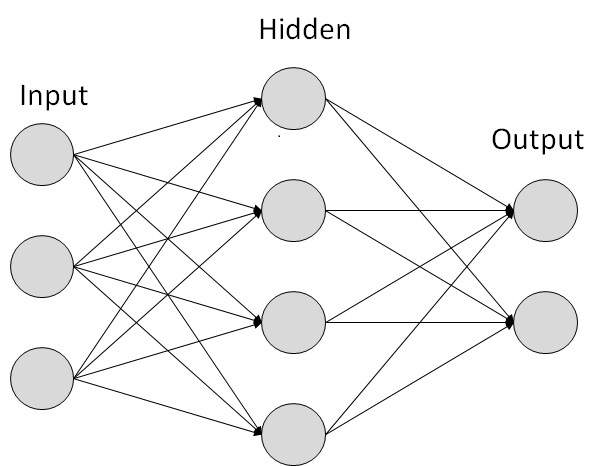

An artificial neural network (ANN) is made up of node layers: an input layer, one or more hidden layers, and an output layer. Each node, or artificial neuron, is connected to others and has an associated weight and threshold. If the output of a node exceeds its threshold value, the node is activated and sends data to the next layer of the network. Otherwise, no data is passed on to the next layer.
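The threshold behaviour described above can be sketched in a few lines of Python. This is only an illustration: the function name `node_output` and the numbers are made up for the example, and returning `None` is simply one way to represent "no data is passed on".

```python
def node_output(inputs, weights, threshold):
    """Return the weighted sum if the node fires, or None if it stays silent."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    # The node is activated only when its output exceeds the threshold.
    return weighted_sum if weighted_sum > threshold else None


# Hypothetical values chosen for illustration.
fired = node_output([1.0, 0.5], [0.6, 0.8], threshold=0.9)   # 0.6 + 0.4 exceeds 0.9
silent = node_output([1.0, 0.5], [0.2, 0.4], threshold=0.9)  # 0.2 + 0.2 does not
```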

Neural networks rely on training data to learn and improve their accuracy over time. Once these learning algorithms have been tuned for accuracy, they become powerful tools in computer science and artificial intelligence, enabling us to classify and cluster data at high speed. Tasks in speech recognition or image recognition can take minutes rather than the hours needed for manual identification by human experts. One of the best-known neural networks is the one used in Google's search algorithm.

Types of Artificial Neural Networks

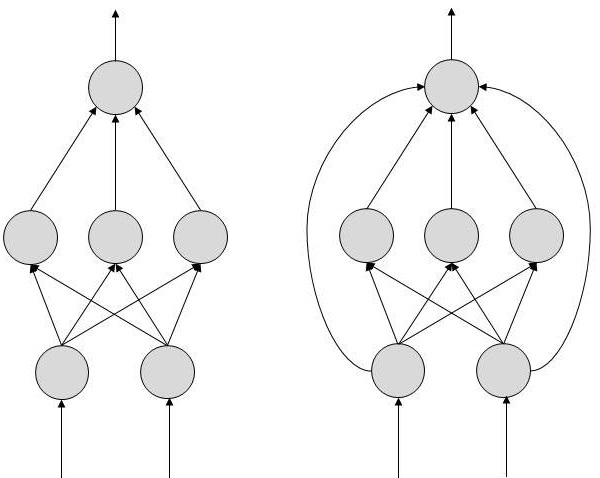

FeedForward and Feedback are the two topologies of artificial neural networks.

FeedForward ANN

Information flows in one direction only in this type of ANN. A unit sends information to other units from which it receives none. There are no feedback loops. Feedforward networks are used in pattern generation, recognition, and classification, and they have fixed inputs and outputs.

FeedBack ANN

Feedback loops are permitted here. These networks are used in content-addressable memories.

Each arrow in the topology diagrams represents a link between two neurons and indicates the path along which information flows. Each link has a weight, a numeric value that controls the signal passed between the two neurons.

If the network produces a "good or desired" output, there is no need to change the weights. If it produces a "poor or undesired" output, or an error, the learning algorithm adjusts the weights to improve subsequent results.
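The text does not name a specific learning algorithm, so as an assumption the sketch below uses the classic perceptron learning rule, where each weight is nudged in proportion to the error. The function name `update_weights` and the learning rate of 0.1 are illustrative choices, not something taken from this post.

```python
def update_weights(weights, inputs, target, output, learning_rate=0.1):
    """Perceptron-style update: leave weights alone when the output is correct."""
    error = target - output  # 0 when the network produced the desired output
    return [w + learning_rate * error * x for w, x in zip(weights, inputs)]


# Desired output: the error is 0, so the weights stay the same.
unchanged = update_weights([0.5, -0.2], [1.0, 1.0], target=1, output=1)

# Undesired output: the weights are adjusted to improve the next result.
adjusted = update_weights([0.5, -0.2], [1.0, 1.0], target=1, output=0)
```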

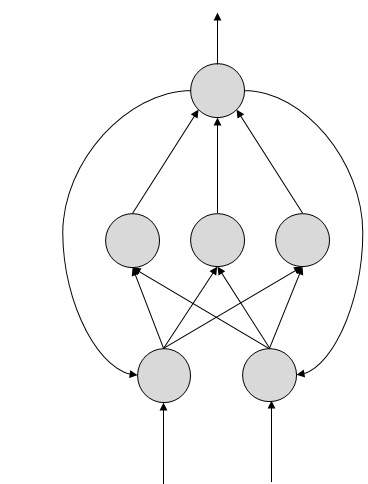

Recurrent neural networks: These are more complex. They save the output of processing nodes and feed it back into the model, which is how the model learns to predict the outcome of a layer. Each node in an RNN acts as a memory cell, carrying the computation on from one step to the next. Like a feed-forward network, an RNN starts with forward propagation, but it then retains the information it has processed so that it can reuse it later. When the network makes an incorrect prediction, it corrects itself through backpropagation and keeps working towards the right prediction. This type of ANN is often used in text-to-speech conversion.
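To make the "memory cell" idea concrete, here is a minimal sketch of a single recurrent unit with scalar weights. It assumes the common formulation in which the new hidden state is the tanh of the weighted input plus the weighted previous state. The weights 0.5 and 0.8 are arbitrary, and training by backpropagation is left out.

```python
import math


def rnn_step(x, h_prev, w_x=0.5, w_h=0.8, bias=0.0):
    """One time step: combine the current input with the remembered state."""
    return math.tanh(w_x * x + w_h * h_prev + bias)


def run_sequence(inputs):
    h = 0.0  # the hidden state starts out empty
    states = []
    for x in inputs:
        h = rnn_step(x, h)
        states.append(h)
    return states


# Only the first input is non-zero, yet later states remain non-zero
# because the unit carries its previous output forward.
states = run_sequence([1.0, 0.0, 0.0])
```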

Convolutional neural networks: Convolutional neural networks (CNNs) are among the most widely used models today. This model uses a variation of the multilayer perceptron and contains one or more convolutional layers, which can be either fully connected or pooled. The convolutional layers create feature maps that record a region of the image, which is ultimately broken into rectangles and passed on for nonlinear processing. CNNs are especially popular in image recognition and have been used in many advanced AI applications, including facial recognition, text digitization, and natural language processing. Other applications include signal processing, image classification, and phrase identification.
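As a rough illustration of how a convolutional layer turns an image into a feature map, the sketch below slides a small kernel over a tiny image with no padding and a stride of 1. The helper name `convolve2d`, the image, and the kernel are all made up for the example. Real CNN libraries do the same thing far more efficiently and learn the kernel values during training.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and record one response per position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the kernel with the image region it currently covers.
            row.append(sum(image[i + a][j + b] * kernel[a][b]
                           for a in range(kh) for b in range(kw)))
        feature_map.append(row)
    return feature_map


# A 3x4 image that is dark on the left and bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
edge_kernel = [[-1, 1]]  # responds where brightness increases left to right
feature_map = convolve2d(image, edge_kernel)
```

The resulting feature map is non-zero only in the column where the dark region meets the bright one, which is the kind of local feature a convolutional layer picks up.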

Deconvolutional neural networks: These networks run the CNN process in reverse. They look for features or signals that may have been lost or judged unimportant to the CNN's task. This model can be used for image synthesis and analysis.

Modular neural networks: These consist of multiple neural networks that work independently of one another. The networks do not communicate with or interfere with each other during the computation. As a result, complex or large computational processes can be carried out more efficiently.

Architecture of ANNs

To understand the architecture of an artificial neural network, we first need to understand what a neural network consists of. A typical neural network contains a large number of artificial neurons, also known as units, arranged in a series of layers, which is where the name "artificial neural network" comes from. Let's look at the different layers found in an artificial neural network:

Input Layer: The input layer contains the artificial neurons (units) that receive input from the outside world. This is the data that the network will learn from, recognise, or otherwise process.

Output Layer: The output layer contains the units that respond to the information fed into the system, showing whether the network has learned the task.

Hidden Layer: The hidden layers sit between the input layer and the output layer. Their sole function is to transform the input into something that the output layer can use.

Most artificial neural networks are fully interconnected: each hidden layer is connected to the neurons in the layer before it and in the layer after it, leaving nothing hanging in the air. This makes a complete learning process possible, and learning is maximised when the weights inside the network are updated after each iteration.

How Does A Neural Network Work?

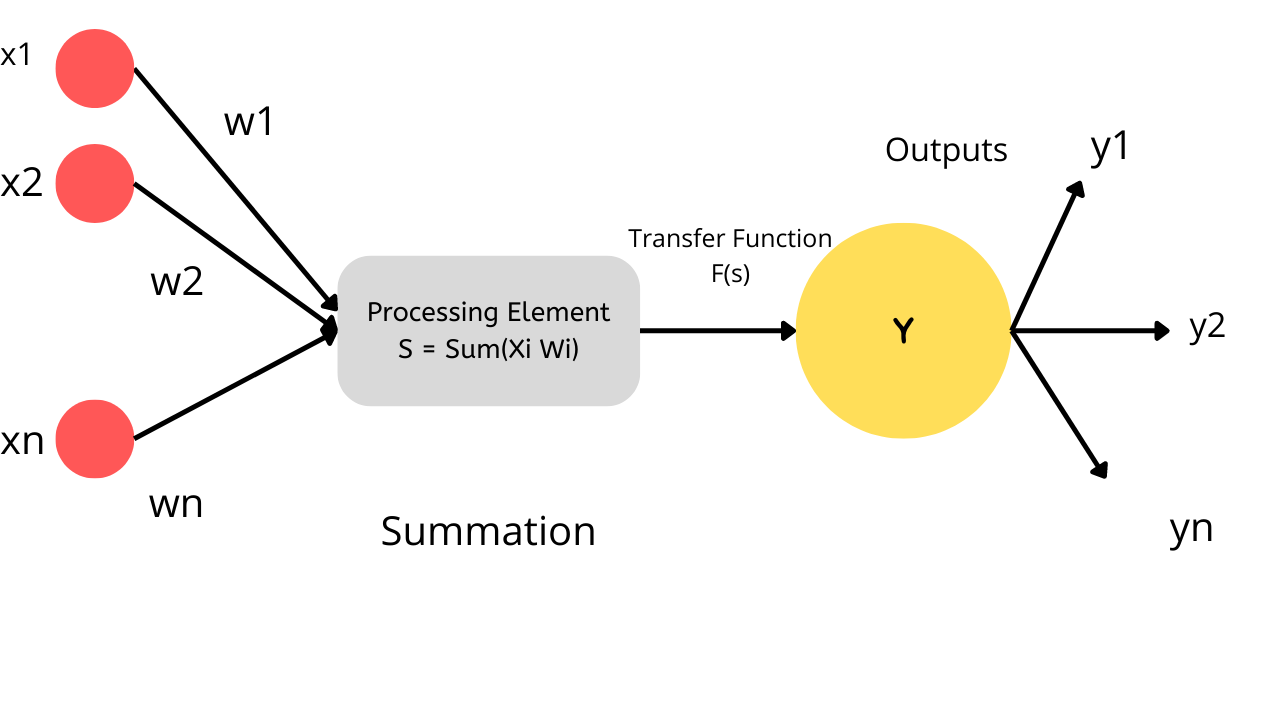

To comprehend neural networks, we must dissect them and comprehend a perceptron, which is a neural network's most fundamental building block.

How Do Perceptrons Work?

A perceptron is a single-layer neural network used to classify linearly separable data. It has four important components:

Inputs

Weights and Bias

Summation Function

Activation or transformation Function

A perceptron's fundamental reasoning is as follows:

The inputs (x) from the input layer are multiplied by the weights (w) that are allocated to them. The weighted sum is created by adding the multiplied values.

The weighted sum is then passed to an appropriate activation function, which maps the input to the corresponding output.
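The perceptron logic above can be sketched as follows. This is a minimal illustration, assuming a simple step function as the activation. The weights and bias are hand-picked so that the perceptron behaves like a logical AND, which is a linearly separable problem. In practice these values would be learned rather than chosen by hand.

```python
def perceptron(inputs, weights, bias):
    """Weighted sum of the inputs plus bias, followed by a step activation."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if weighted_sum >= 0 else 0


# Hand-picked parameters (an assumption for this example): output 1 only
# when both inputs are 1.
weights = [1.0, 1.0]
bias = -1.5

results = {pair: perceptron(pair, weights, bias)
           for pair in [(0, 0), (0, 1), (1, 0), (1, 1)]}
```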

Deep Learning Weights and Biases

Why do we need to give each input a weight?

Once an input variable is fed into the network, it is assigned a randomly chosen initial weight. The weight of each input indicates how important that input is in predicting the outcome.

The bias parameter, on the other hand, lets you shift the activation function curve so that a more accurate output is obtained.

Summation Function: After each input has been assigned a weight, the product of each input and its weight is calculated. Adding all of these products gives the weighted sum. This is the job of the summation function.

Activation Function: The main purpose of an activation function is to map the weighted sum to the output. Examples of activation (transformation) functions include tanh, ReLU, and sigmoid.
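For reference, the three activation functions named above can be written with nothing but Python's standard `math` module. This is a plain sketch of their standard definitions. Deep learning libraries ship their own optimised versions.

```python
import math


def sigmoid(z):
    """Squashes any real number into the range (0, 1)."""
    return 1 / (1 + math.exp(-z))


def relu(z):
    """Passes positive values through unchanged and clips negatives to 0."""
    return max(0.0, z)


def tanh(z):
    """Squashes any real number into the range (-1, 1)."""
    return math.tanh(z)


# The same weighted sum mapped to an output by each function.
weighted_sum = 0.0
outputs = (sigmoid(weighted_sum), relu(weighted_sum), tanh(weighted_sum))
```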

Explanation with Example:

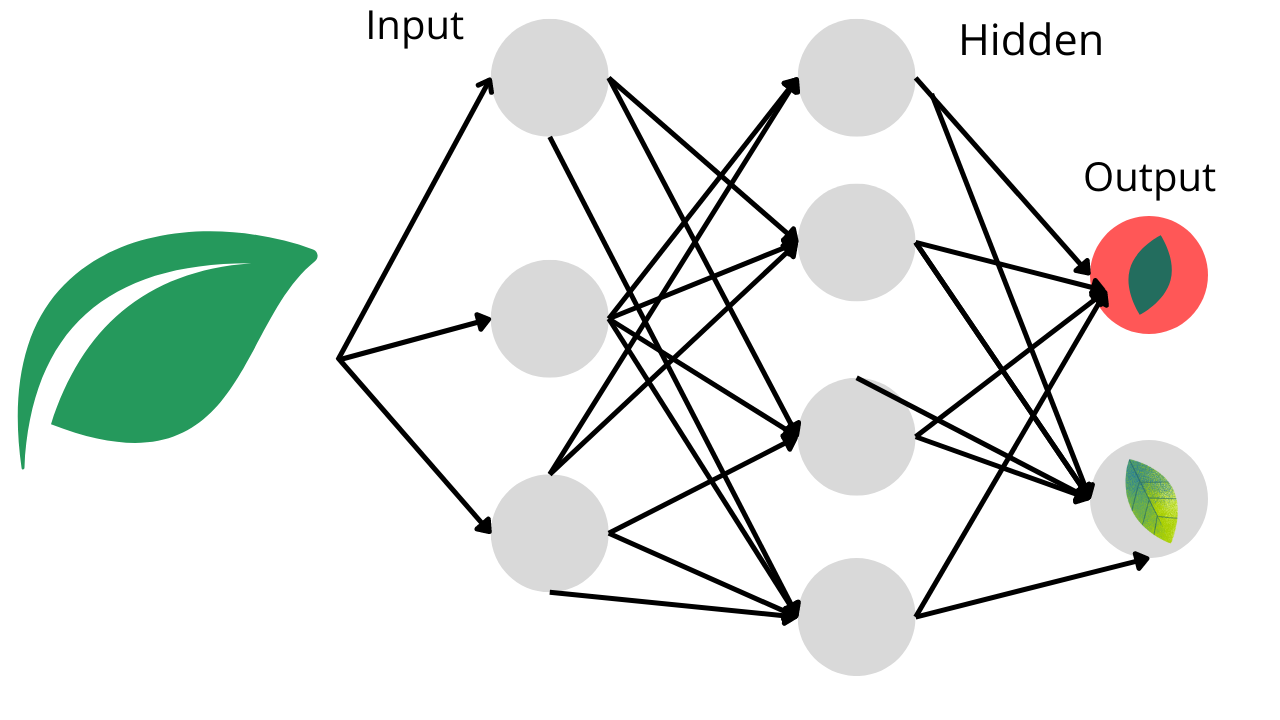

Imagine you are tasked with creating an Artificial Neural Network (ANN) that divides photographs into two categories:

Class A: Contains pictures of healthy leaves.

Class B: Contains pictures of diseased leaves.

So how do you build a neural network that separates diseased crops from healthy ones?

The first step is always to process and transform the input into a form that the network can work with. In our case, each leaf image is broken down into pixels according to its dimensions.

For instance, a 30 by 30 pixel image contains 900 pixels in total. These pixels are represented as matrices, which are fed into the input layer of the neural network.
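As a small sketch of this preparation step, the snippet below builds a stand-in 30 by 30 grayscale image and turns it into 900 input values. Two things here are assumptions rather than part of the original example: pixel brightness is taken to be an integer from 0 to 255, and the matrix is flattened into a single list and scaled to the range 0 to 1, which is a common but not mandatory way to feed an image to an input layer.

```python
# Stand-in for a real leaf photo: a 30x30 matrix of brightness values (0-255).
image = [[(row * 30 + col) % 256 for col in range(30)] for row in range(30)]


def to_inputs(image):
    """Flatten the pixel matrix row by row and scale each value to 0..1."""
    return [pixel / 255 for row in image for pixel in row]


inputs = to_inputs(image)  # one value per pixel for the input layer
```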

Just as our brains have neurons that help build and connect thoughts, an ANN has perceptrons that accept inputs and process them by passing them from the input layer to the hidden layers and finally to the output layer.

As each input is passed from the input layer to the hidden layer, it is assigned an initial random weight. The inputs are then multiplied by their weights, and the sum is passed as input to the next hidden layer.

Each perceptron is also assigned a bias value, which is added to the weighted sum of its inputs. In addition, each perceptron is passed through an activation (transformation) function that determines whether it is activated or not.

An activated perceptron transmits data to the next layer. Data propagates through the neural network in this way until it reaches the output layer. This is known as forward propagation.

The output layer determines whether the data belongs to class A or class B by deriving a probability.
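Putting the steps of this example together, forward propagation for a single leaf image might look like the sketch below. Everything specific in it is an assumption made for illustration: the 16 hidden units, the sigmoid activation, the weight range, and the random stand-in pixels. The weights are random and untrained, so the predicted class is meaningless until the network has been trained.

```python
import math
import random


def sigmoid(z):
    return 1 / (1 + math.exp(-z))


def dense_layer(inputs, weights, biases):
    """Each perceptron: weighted sum of its inputs plus bias, then activation."""
    return [sigmoid(sum(x * w for x, w in zip(inputs, neuron_w)) + b)
            for neuron_w, b in zip(weights, biases)]


def random_weights(n_neurons, n_inputs, rng):
    """Initial random weights, one list per perceptron."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(n_inputs)]
            for _ in range(n_neurons)]


rng = random.Random(0)                       # seeded so the run is repeatable
pixels = [rng.random() for _ in range(900)]  # stand-in for a 30x30 leaf image

hidden_w, hidden_b = random_weights(16, 900, rng), [0.0] * 16
output_w, output_b = random_weights(1, 16, rng), [0.0]

# Forward propagation: input layer -> hidden layer -> output layer.
hidden = dense_layer(pixels, hidden_w, hidden_b)
probability_b = dense_layer(hidden, output_w, output_b)[0]

# The output is read as the probability that the leaf belongs to class B.
label = "Class B" if probability_b >= 0.5 else "Class A"
```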

Applications of Neural Networks

One of the earliest applications of neural networks was image identification, but the technique has since been effectively used in a wide range of other fields, such as:

⮞ Chatbots

⮞ Natural language processing, translation, and language generation

⮞ Stock market prediction

⮞ Route planning and optimization for delivery drivers

⮞ Drug discovery and development

These are only a few of the many fields in which neural networks are used today. Prime candidates include any process that involves a large amount of data and follows strict rules or patterns. If the amount of data involved is too large for a human to make sense of in a reasonable amount of time, the process is probably a good candidate for automation with artificial neural networks.

Advantages of Neural Network

Artificial neural networks have several benefits, including:

Parallel processing means the network can perform more than one task at the same time.

Information is stored across the entire network, not just in a database.

The ability to learn and model nonlinear, complex relationships helps the network model the real-world relationships between input and output.

Fault tolerance means that the corruption of one or more cells of the ANN does not stop it from generating output.

Gradual corruption means the network degrades slowly over time rather than being destroyed instantly by a problem.

The network can produce output with incomplete knowledge, with the loss of performance depending on how important the missing information is.

No restrictions are placed on the input variables, such as how they should be distributed.

Machine learning means the ANN can learn from events and make decisions based on what it has observed.

Because an ANN can learn hidden relationships in the data without being given a fixed relationship, it can better model highly volatile data and non-constant variance.

Because ANNs can generalise and infer unseen relationships, they can predict the output for unseen data.

Disadvantages of ANNs

The following are some of ANNs' drawbacks:

There are no rules for determining the proper network structure, so the appropriate architecture can only be found through trial and error and experience.

Hardware dependence: neural networks require processors with parallel processing capabilities.

Because the network works with numerical data, every problem must be translated into numerical values before it can be presented to the ANN.

The lack of explanation behind the solutions a network produces is one of the biggest drawbacks of ANNs. Not being able to explain the why or how behind a solution leads to a lack of trust in the network.

Deep Learning Vs Neural Networks

The terms "deep learning" and "neural networks" are often used interchangeably in conversation, which can be confusing. It is worth noting that the "deep" in deep learning simply refers to the depth of layers in a neural network. A neural network with more than three layers, counting the input and output layers, can be considered a deep learning algorithm. A neural network with only two or three layers is just a basic neural network.

Good Luck & Happy Learning!!